News of the global advance of the Covid-19 epidemic, as of the varying fortunes of international and national responses to it, confronts many of us daily (see e.g. here). These accounts of what is happening are in turn based on data generated by national, international and transnational organisations, and illustrated in maps, graphs, tables and figures, as inputs of current reports or as models to predict the future. Commonly they are presented as ‘snapshots’, illustrating diachronic developments over time or synchronic differences between countries or other localities. Alternatively, they may be displayed on so-called dashboards that focus on daily bulletins, or even minute-by-minute changes, some of which allow for interaction with the user and offer the possibility of interrogating the data with respect to given places and times.

There is little doubt that, after two years, many people are now suffering from an information overload. In addition, criticisms of the validity or utility of such data form part of the ideological battle with those who refuse to get vaccinated or object to specific restrictions (here and here). Even those who go along with the doxa recognise that the predictive value of this data has often been limited, both underestimating and overestimating the spread of the disease. And this is further complicated by the recent Omicron variant behaving differently from the Delta type that preceded it. Recognition of these problems means that there are increasing calls to change what gets included in the data; in Italy, for example, consideration is being given to counting only those exhibiting Covid symptoms, not those who test positive without them. In the UK, the idea has even been mooted to stop communicating such statistics because people get ‘addicted to them’.

What are the implications of the battle against Covid-19 for students of comparative law and comparative social policy? Most obviously, there is the challenge of how best to explain similarities and differences in the making and application of laws, rules, recommendations and nudges etc. in different jurisdictions and assess the relative ‘success’ of their policy choices. We also have a rare opportunity to investigate what worldwide patterns of compliance and resistance to these messages can tell us about the legitimacy of law, respect for expertise and so on. There is, for example, evidence of unexpectedly higher levels of resistance to Covid-based restrictions in places like Sweden, the Netherlands or Germany, as compared to some countries in Southern Europe which have often been seen as less inclined to be law-abiding.

Most commentators also agree that the response to the Covid-19 pandemic provides all too clear evidence for the claim that current global challenges that need coordinated handling are instead being met by fragmented and inconsistent responses. It appears to be the case that the nation state, or at least certain nation-states, have re-emerged as central players, at the expense of international and transnational organisations. Certainly little seems to have been done to ensure that help is sent where most needed. Allegedly, manufacturers have instead been more guided by their need and concern for profits, and richer countries have been accused of hoarding vaccines, even where it would be in their own interest to share them out.

But comparativists may also want to give attention to the role of information in all this. Did efforts to share data about the behaviour of the virus and what is happening during the pandemic help produce the basis for more global solidarity? Or was it as much part of the problem as of any solution? These questions are discussed in a variety of contributions to a recent special issue of the International Journal of Law in Context, on ‘Numbers in an emergency’ in the COVID-19 crisis, that I edited together with Mathias Siems. My contribution there discusses Covid data (henceforth ‘Covid indicators’) as a case-study of global social indicators. It fits into my long-standing interest in working out what is involved in doing research on ‘comparison as a social practice’. In this ‘second-order’ form of comparison, the aim is to try to make sense of how others compare, rather than to do comparison in the first person, thereby learning more about the ways comparisons are used in the world (see e.g. here, here, here, and here).

The starting point here is the variety of Covid indicators that have been on offer. Some, focusing on raw data as collected locally, report on the progress of the epidemic, noting changing infection and fatality rates (e.g. World Health Organization, here, here, here, and here). Others, such as those comparing the health policies of different countries, sought to show which of these worked best for limiting the spread of the virus (here, here, here, here, and here). These Covid indicators are then employed for a variety of purposes. In particular, they serve as key metrics used by governments, administrators, and citizens for such aims as predicting the future, and coordinating and legitimating decision-making.

As Sally Merry and her colleagues have explained, global social indicators do not merely circulate information, but have both ‘truth effects’ and ‘governance effects’. Here the ever-updated information in indicators, comparing the relative success of a given country in dealing with the virus over time, was intended to show and justify the need for preventive action. Like other global indicators, Covid indicators, too, simultaneously purport both to compare performance as shown by the spread of Covid and also to standardise what counts as good performance and move those being assessed towards better compliance with such a standard. What interests me (as it interested Merry) is the way these purposes can create tension with the supposed guiding principle of successful comparison which involves ‘comparing like with like’ (or, as the saying goes, not ‘comparing apples with oranges’). Where, as here, the practical point of a comparative exercise has more to do with commensuration against a common standard, through ranking, coordinating and standardising, it is understandable that more importance is given to judging places on the basis that they should be alike than explaining the reasons for their differences, and ensuring that only like places are compared.

Indeed it may be positively counter-productive to insist on only ‘comparing like with like’. Telling us that Sweden is a place with relatively little ‘corruption’, whilst Somalia suffers from a great deal, in no way depends on the comparison being ‘fair’. On the contrary, the many factors that help explain why Somalia is more likely to have problems with ‘corruption’ only reinforce the credibility of the ranking. The same applies to the argument that the relevant actors in Somalia might see ‘corruption’, as it is defined in Sweden, as less salient for them.

In the case of Covid indicators, communications about how the epidemic was spreading typically made no allowance for differences in the places being assessed in terms of the risks they were exposed to, or the resources they had available to respond. Little allowance was made for even well-known differences in the goals that countries were trying to achieve, as when giving different weight to damage to the economy, or to political liberty and freedom of movement. From some points of view, and for some purposes, this makes sense. Think for example of business people or tourists wanting to know the extent of threat to them posed by the disease in different potential destinations. On the other hand, it would seem that any serious effort to compare the success of governments in handling the epidemic would need to take into account relevant similarities and differences in the nature of the challenge being faced and the resources available to deal with it.

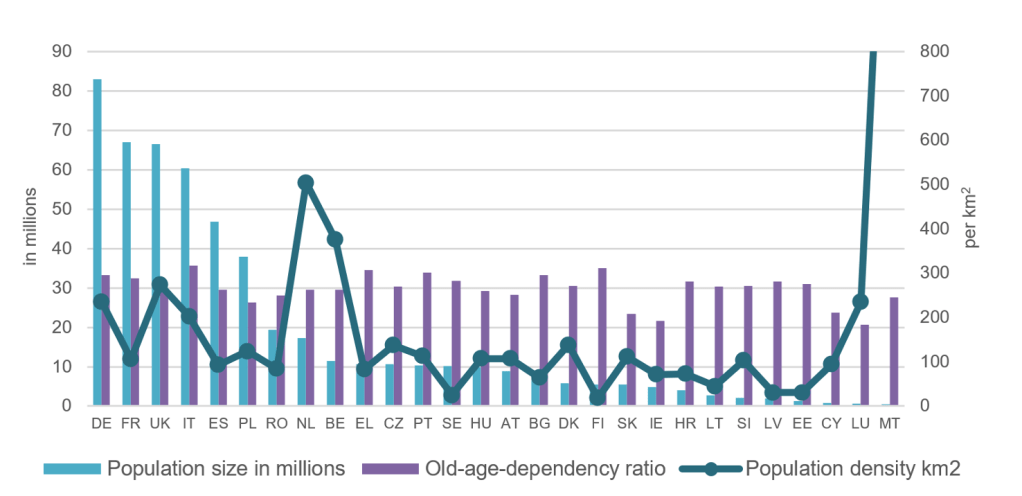

To some extent this is recognised by those who try to ‘control’ for differences such as the age or density of the population at risk (see Figure 1, taken, with permission, from Hantrais and Letablier, Comparing and Contrasting the Impact of the COVID-19 Pandemic in the European Union, Routledge, 2021, p. 4).

If our main interest is in which places are doing better, and are therefore safer, we may be unconcerned to ‘control’ for differences. But if we want to decide whether given policies that have been adopted have been really successful (and are therefore to be copied) we need to be certain we are ‘comparing like with like’ in terms of the difficulty of the challenge. Yet if that is the reason, why refer to only a few of the many factors that could potentially be relevant and not include others – for example the greater difficulty of organising lockdowns in poorer countries where workers and families cannot afford to stay at home, or do not have homes that are suitable?

Attempts to use Covid indicators both as measures of the current level of threat of the disease and as yardsticks of achievement and performance in responding to it can mean that comparison and commensuration become confused – with potentially life-and-death consequences. In her last book, Merry suggested that the answer to producing fairer comparisons using global social indicators, for example in studies of compliance with human rights, lies in producing better qualitative research so as to ensure that comparisons are fully faithful to the contexts being juxtaposed. But the problem, it seems to me, cannot be ‘solved’ in this way because it derives more from the variety of potentially conflicting purposes for which comparison as a social practice can be undertaken. More fundamentally, case-studies of global social indicators in general, and Covid indicators in particular, may help us see why, taken to its logical conclusion, the search for comparing only ‘like with like’ can make comparison for all practical purposes impossible.

Posted by Professor David Nelken (Professor of Comparative and Transnational Law, Dickson Poon Law School, King’s College, London)
